
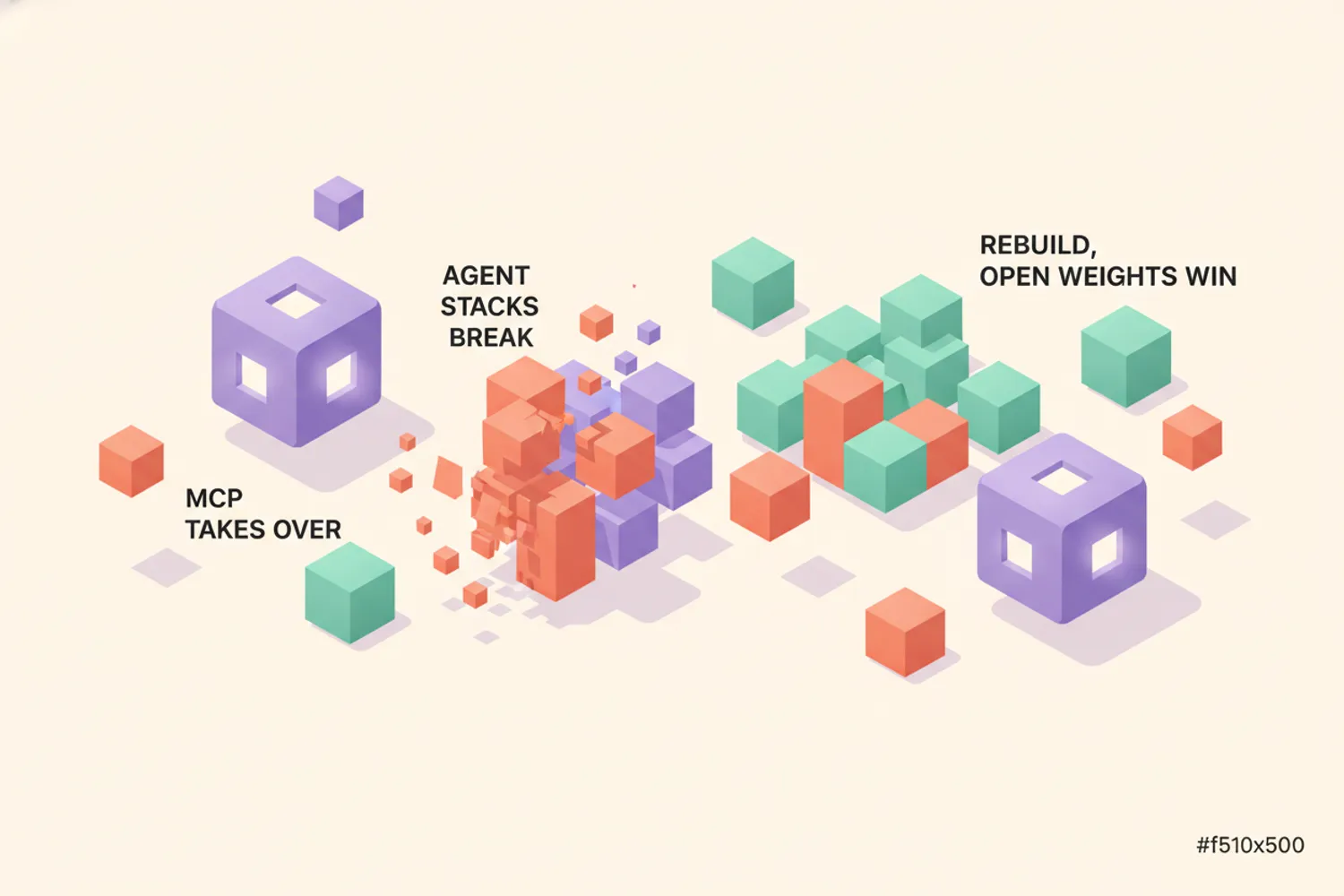

TLDR: The AI agent stack is undergoing a brutal but necessary refactor. OpenClaw v2026.4.10 and OpenAI Codex v0.120.0 just dropped breaking architectural changes, while the Model Context Protocol (MCP) quietly became the industry standard. Meanwhile, Google's Gemma 4 and Alibaba's Qwen 3.5 are proving open-weight models can outpace proprietary labs at half the cost. If you're building agents today, expect your toolchains to break, then rebuild faster.

Today is not a typical update cycle. It is a platform inflection point. The Model Context Protocol (MCP) has finally graduated from experimental spec to de facto interoperability layer, forcing every major player to adapt or get left behind. OpenClaw rolled out v2026.4.10, bundling Codex as a native provider with completely rewritten auth and model discovery paths. Simultaneously, OpenAI shipped Codex v0.120.0, pushing Realtime V2 background streaming and millisecond TUI timestamps. Taken together, these releases signal that the era of fragile, single-model wrappers is over. We are moving toward deterministic, multi-agent runtimes that negotiate their own tool access. If your pipeline still relies on hardcoded API routes, it is already technical debt.
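To make "negotiating tool access" concrete, here is a minimal sketch of the discovery step. MCP messages are JSON-RPC 2.0, and a client asks a server what it offers with the `tools/list` method. The tool names and the sample response below are hypothetical, and the transport and the `initialize` handshake are left out, so treat this as an illustration of the message shape rather than a working client.

```python
import json
from itertools import count

_ids = count(1)  # simple request-id generator for this sketch


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request object, the envelope MCP messages use."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def parse_tool_list(response):
    """Map tool name -> input schema from a `tools/list` response."""
    if "error" in response:
        raise RuntimeError(response["error"].get("message", "unknown error"))
    return {
        tool["name"]: tool.get("inputSchema", {})
        for tool in response["result"]["tools"]
    }


# Hypothetical server reply; the tool names are made up for illustration.
sample_response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "tools": [
            {
                "name": "search_docs",
                "description": "Full-text search over project docs",
                "inputSchema": {
                    "type": "object",
                    "properties": {"query": {"type": "string"}},
                    "required": ["query"],
                },
            },
            {
                "name": "read_file",
                "description": "Read a file from the workspace",
                "inputSchema": {
                    "type": "object",
                    "properties": {"path": {"type": "string"}},
                    "required": ["path"],
                },
            },
        ]
    },
}

request = jsonrpc_request("tools/list")
print(json.dumps(request))

tools = parse_tool_list(sample_response)
print(sorted(tools))
```

The point of the pattern: the agent learns tool names and argument schemas at runtime instead of shipping with a hardcoded route per integration.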

Why is your AI agent stack breaking this week?

The breaking changes are not random. They are the growing pains of a maturing ecosystem. Claude Code users are currently in crisis after the abrupt removal of the `/buddy` skill in v2.1.97, sparking fierce debates over whether recent Opus 4.6 token consumption spikes are masking actual reasoning degradation. The official response? Route smarter. The newly documented Claude Advisor tool now dynamically distributes tasks between Claude Opus, Sonnet, and Haiku to balance latency, cost, and quality. But routing alone does not fix architectural debt. That is why OpenCode is undergoing a massive migration to Effect-TS, merging dozens of facade PRs to finally stabilize its state management.
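What does "route smarter" look like in practice? The sketch below is not the Claude Advisor API, which is not shown here. It is a toy router under stated assumptions: three tiers named after the model families, made-up capability and relative-cost numbers, and a crude keyword heuristic standing in for real task classification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    capability: int       # 1 = lightweight, 3 = strongest (illustrative scale)
    relative_cost: float  # illustrative ratios, NOT real pricing


# Hypothetical tiers; the numbers exist only to make the trade-off visible.
TIERS = [
    Tier("haiku", 1, 1.0),
    Tier("sonnet", 2, 3.0),
    Tier("opus", 3, 15.0),
]


def estimate_difficulty(task: str) -> int:
    """Crude keyword heuristic standing in for a real task classifier."""
    text = task.lower()
    if any(k in text for k in ("architecture", "refactor", "prove", "design")):
        return 3
    if any(k in text for k in ("implement", "debug", "review")) or len(text) > 200:
        return 2
    return 1


def route(task: str, max_relative_cost: float = float("inf")) -> Tier:
    """Pick the cheapest tier that can handle the task within the cost cap."""
    need = estimate_difficulty(task)
    affordable = [t for t in TIERS if t.relative_cost <= max_relative_cost]
    if not affordable:
        raise ValueError("no tier fits the cost cap")
    capable = [t for t in affordable if t.capability >= need]
    if capable:
        return min(capable, key=lambda t: t.relative_cost)
    # Nothing affordable is strong enough: fall back to the best we can pay for.
    return max(affordable, key=lambda t: t.capability)


for task in ("rename a variable", "debug this failing test",
             "design the plugin architecture"):
    print(task, "->", route(task).name)
```

Note the fallback branch: when the cost cap rules out the tier a task needs, the router degrades to the strongest affordable model instead of failing. Whether that is the right call depends on how expensive a wrong answer is for your workload.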

Here's the thing: The real winners this week are frameworks that abstract the chaos. NanoBot just pivoted to production-grade autonomy, adding Automatic Skill Discovery that lets agents self-improve by recognizing code patterns instead of waiting for manual plugins. Over in AWS land, the Hermes Agent framework dropped native Bedrock integration with enterprise migration tools specifically designed to replace legacy OpenClaw deployments. Meanwhile, IronClaw v0.25.0 hardened its ACP orchestration with strict WASM commitments, proving meta-agent frameworks can finally run outside sandboxed labs.

- MCP is the new USB-C: With activepieces natively integrating over 400 MCP servers and trycua/cua standardizing cross-platform Computer-Use Agent sandboxes, the protocol has won. Tool creators no longer need custom adapters. Just publish an MCP server and let agents discover it.

- GPT-5.4 is the target: OpenClaw's six-contract agentic runtime parity program now explicitly optimizes for GPT-5.4, driving massive architectural overhauls across the community to ensure model-agnostic compaction and native threads.

- Chinese enterprise stack consolidates: CoPaw is optimizing semantic skill routing and multi-agent meeting systems specifically for Feishu and Lark, while Manus Skills and the Viktor Skills Marketplace transform one-off workflows into portable, app-store-discoverable automation modules.

- Cost control is no longer optional: Entroly launched as an open-source token optimizer for Claude Code, Cursor, and Codex, while Moltis introduced deterministic compaction and agent-loop detection specifically to cap runaway API bills in production.

- UI and Memory are catching up: OpenClaw's Dreaming UI now features ChatGPT import ingestion and Memory Palace subtabs. Collabmem and mem0ai/mem0 are solving cross-session persistence, while SoulLink brings spatial gesture-based 3D vibe coding to mobile, bypassing traditional desktop constraints entirely.
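The cost-control bullet above mentions agent-loop detection. One simple way to approximate the idea is to fingerprint each tool call and flag the run when the same call keeps recurring inside a short window. This is a sketch under assumptions, not how Moltis implements it: the class name, window size, and repeat threshold are all hypothetical.

```python
import hashlib
import json
from collections import deque


class LoopDetector:
    """Flags an agent run when an identical tool call repeats too often."""

    def __init__(self, window=6, max_repeats=3):
        self.recent = deque(maxlen=window)  # fingerprints of the last N calls
        self.max_repeats = max_repeats

    @staticmethod
    def _fingerprint(tool, args):
        # sort_keys makes the hash independent of argument ordering
        payload = json.dumps({"tool": tool, "args": args}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def record(self, tool, args):
        """Record a call; return True if it now looks like a loop."""
        fp = self._fingerprint(tool, args)
        self.recent.append(fp)
        return self.recent.count(fp) >= self.max_repeats


detector = LoopDetector()
for step in range(4):
    looping = detector.record("read_file", {"path": "config.yaml"})
    print(step, looping)
```

A runtime would typically abort or escalate to a human once `record` returns `True`, which caps the bill before the loop burns through the token budget. Exact-match fingerprints miss loops where arguments drift slightly, so a production detector would likely need fuzzier matching.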

Even the CLI wars are heating up. Gemini CLI dropped nightly v0.39.0 with critical UTF-8 fixes, XDG compliance, and a long-awaited `/enhance` command. Qwen Code pushed v0.14.3-nightly with tighter session management and Chinese localization patches. But the loudest outcry is reserved for GitHub Copilot CLI, which faces severe community backlash over opaque billing and reliability regressions, with minimal response from its developers. If you are tired of the noise, local-first is the answer: AI tools running entirely in the browser are surging, proving developers will trade cloud convenience for privacy and zero API keys. And yes, Pi finally patched that critical 90GB RAM process leak and hardened its model registry, so update immediately if you run it locally.

Are open-weight models finally beating the labs?

Hot take: The big labs are scared of the quantization curve. Unsloth has systematically converted every major release to GGUF quantization, creating a de facto standard for accessible local inference. When you can run production-grade reasoning on consumer hardware, the $200/month API subscriptions look like a legacy tax.
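The arithmetic behind that claim is simple: weight memory is roughly parameter count times bits per weight, divided by eight. The sketch below runs it for a 27B-parameter model, the size of the community distillation mentioned later in this issue. It is a back-of-the-envelope estimate only: real GGUF quant types store per-block scales, so effective bits per weight run somewhat higher than the nominal value, and the KV cache and activations are not counted.

```python
def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GiB."""
    total_bytes = n_params * bits_per_weight / 8
    return total_bytes / 2**30


N_PARAMS = 27e9  # a 27B-parameter model

for bits in (16, 8, 4):
    print(f"{bits:>2}-bit: {weight_memory_gib(N_PARAMS, bits):.1f} GiB")
```

At 16 bits the weights alone need about 50 GiB, which is datacenter territory. At a nominal 4 bits they drop to roughly 12.6 GiB, which is within reach of a single high-end consumer GPU.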

The model landscape is fracturing into vertical specialists and open-weight powerhouses. Google dropped the Gemma 4 family, and it is already dominating ecosystem adoption thanks to experimental any-to-any architecture variants that handle audio, text, and video in a single bidirectional pipeline. This is a direct shot at the proprietary moats. Alibaba's Qwen 3.5 is emerging as the preferred open-weight base for community fine-tuning, with Zhipu's GLM-5.1 following close behind thanks to a highly efficient MoE architecture and DSA optimization. The community did not wait for permission to push them further: Jackrong/Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled is currently trending, suggesting you can distill advanced reasoning from proprietary models into fully open architectures without the licensing headaches.

- Vertical AI is winning: shiyu-coder/Kronos specializes entirely in financial markets language, while nvidia/personaplex-7b-v1 delivers Moshi-based speech-to-speech with long-term persona persistence, finally making conversational voice agents viable for production.

- Generative video scales down: Waypoint-1.5 democratized real-time world generation by optimizing for everyday consumer GPUs, while netflix/void-model proves major studios are heavily investing in CogVideoX-based inpainting workflows for post-production.

- Voice cloning gets wild: OpenBMB/VoxCPM dropped a tokenizer-free multilingual text-to-speech model enabling high-fidelity cloning, while creator HauhauCS continues releasing aggressive uncensored fine-tunes, highlighting sustained demand for unfiltered local models despite ongoing licensing tensions.

- Education and research lower barriers: jingyaogong/minimind enables training a 64M-parameter GPT from scratch in just 2 hours, making LLM architecture accessible to students instead of just GPU-rich corporations.

- Security fears lag behind capabilities: Anthropic's new Mythos model release raised significant AI-powered hacking concerns, forcing the industry to confront dual-use risks head-on.
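For a sense of how small a 64M-parameter GPT is, the standard rule of thumb for a decoder-only transformer is about 12·d² parameters per block (4·d² for attention, 8·d² for an MLP with 4x expansion) plus the embedding table. The configuration below is hypothetical, chosen only because it lands in the same ballpark. It is not minimind's actual configuration, and the estimate ignores biases, layer norms, and positional embeddings.

```python
def gpt_param_estimate(n_layers: int, d_model: int, vocab_size: int,
                       tie_embeddings: bool = True) -> int:
    """Rule-of-thumb parameter count for a decoder-only transformer."""
    per_block = 12 * d_model ** 2       # 4*d^2 attention + 8*d^2 MLP
    embeddings = vocab_size * d_model   # token embedding table
    if not tie_embeddings:
        embeddings *= 2                 # separate output projection
    return n_layers * per_block + embeddings


# Hypothetical small configuration, for scale only.
total = gpt_param_estimate(n_layers=8, d_model=768, vocab_size=6400)
print(f"{total / 1e6:.1f}M parameters")
```

Eight layers at width 768 is a model you can reason about end to end, which is exactly why projects at this scale work as teaching tools.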

Underneath all this, the engineering community is shifting focus from raw parameter counts to architectural efficiency. Research on Transformers now prioritizes understanding attention mechanisms and optimizing inference latency over simply scaling model size. Tools like ONNX are seeing massive adoption for debugging transformer latency issues in production. Meanwhile, the Berkeley AI Agent Benchmark Critique exposed fundamental flaws in how we currently evaluate agents, arguing that static multiple-choice tests fail to capture real-world autonomy, planning, and tool-use reliability. If benchmarks lie, what do we measure next?
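Latency debugging usually starts with tail percentiles, not averages. Here is a minimal, stdlib-only harness for that. The `fake_inference` callable is a hypothetical stand-in for whatever you are profiling, such as one forward pass through an exported model. No ONNX tooling is used here, to keep the sketch self-contained.

```python
import statistics
import time


def measure_latency_ms(fn, warmup=3, runs=30):
    """Time repeated calls to fn, returning per-call latencies in ms."""
    for _ in range(warmup):
        fn()  # warm caches before recording anything
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize(samples):
    """Report median, 95th percentile, and worst case."""
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {
        "p50": statistics.median(samples),
        "p95": cuts[94],  # 95th of the 99 cut points
        "max": max(samples),
    }


def fake_inference():
    # Hypothetical workload standing in for a model forward pass.
    sum(i * i for i in range(10_000))


print(summarize(measure_latency_ms(fake_inference)))
```

If p95 sits far above p50, the problem is usually variance (cold caches, allocation, contention) and not raw compute, which changes where you look next.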

Is enterprise AI moving from chatbots to autonomous workflows?

Worth watching: Anthropic just launched Project Glasswing, a formal verification initiative designed to mathematically secure AI-generated code against vulnerabilities. If agents write production software, we need proofs, not just unit tests.

The consolidation wave is hitting enterprise AI hard. OpenAI quietly acquired Cirrus Labs, an AI infrastructure company, signaling that big players are buying compute pipelines instead of waiting for them to mature. This comes as OpenAI faces a looming $100B+ legal trial with Elon Musk, which could force unprecedented corporate restructuring. Meanwhile, Meta is preparing to award nearly $1 billion in bonuses to top AI executives, proving that talent retention in the model lab era requires generational compensation packages.

- RAG is getting rewritten: VectifyAI/PageIndex introduced a vectorless, reasoning-based RAG indexing method that challenges traditional embedding retrieval. Pair it with microsoft/markitdown, Microsoft's Python utility for converting messy source documents into clean Markdown, and traditional chunk-and-embed strategies start to look dated.

- Workflow orchestration matures: obra/superpowers brings strict software engineering discipline to AI-native development, while Audos 2.0 abstracts multi-business orchestration for micro-SaaS operators running parallel AI ventures.

- Finance gets AI-native: Google Finance launched an AI assistant leveraging proprietary data moats for real-time market reasoning, while Perplexity Finance aggregated fragmented accounts into a unified personal finance OS. Both are racing to own the financial context layer.

- Context fragmentation solved: Spine now synthesizes research across multiple SaaS apps to unify workplace context, while Minty applies structured AI career coaching to bridge the gap between basic chatbots and executive-level guidance.

- Creative and compliance tools scale: WM Studio lets marketing teams use brand-safe digital twins for campaign content, and LayerProof Chromo generates data-backed presentation slides with verifiable sourcing to kill enterprise hallucination concerns.
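To see what "vectorless, reasoning-based" retrieval from the RAG bullet above could mean, consider this toy sketch: build a tree from a document's Markdown headings, then walk down it one level at a time, picking the most relevant branch. This is not PageIndex's API or algorithm. In particular, simple keyword overlap stands in for the LLM judgment a real system would use at each step, and all function names and the sample document are hypothetical.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Node:
    title: str
    level: int
    text: str = ""
    children: list = field(default_factory=list)


def build_tree(markdown: str) -> Node:
    """Turn Markdown headings into a section tree; body text attaches to
    the nearest preceding heading."""
    root = Node("root", 0)
    stack = [root]
    for line in markdown.splitlines():
        match = re.match(r"^(#{1,6})\s+(.*)$", line)
        if match:
            node = Node(match.group(2).strip(), len(match.group(1)))
            while stack[-1].level >= node.level:
                stack.pop()  # climb back up to this heading's parent
            stack[-1].children.append(node)
            stack.append(node)
        else:
            stack[-1].text += line + "\n"
    return root


def _tokens(s: str) -> set:
    return set(re.findall(r"[a-z0-9]+", s.lower()))


def subtree_tokens(node: Node) -> set:
    toks = _tokens(node.title + " " + node.text)
    for child in node.children:
        toks |= subtree_tokens(child)
    return toks


def retrieve(query: str, root: Node):
    """Descend the tree, following the best-matching child at each level.
    Keyword overlap is a stand-in for an LLM relevance judgment."""
    q = _tokens(query)
    node, path = root, []
    while node.children:
        best = max(node.children, key=lambda c: len(q & subtree_tokens(c)))
        if not q & subtree_tokens(best):
            break  # nothing below looks relevant; stop here
        node = best
        path.append(node.title)
    return path, node.text.strip()


SAMPLE = """# Handbook
## Billing
Invoices are issued monthly.
### Refunds
Refunds are processed within 14 days.
## Security
Rotate API keys every 90 days.
"""

path, answer = retrieve("how long do refunds take", build_tree(SAMPLE))
print(" > ".join(path))
print(answer)
```

No embeddings, no vector store, no chunk boundaries cutting a section in half. The trade-off is that every hop costs a reasoning step, so the approach pays off mainly on long, well-structured documents.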

Even culture cannot escape the shift. The satirical game Hormuz Havoc was completely overrun by AI bots within 24 hours of release, sparking intense community debate on whether AI disruption is accelerating faster than game design can adapt. On the detection side, GPTZero is facing heavy criticism for rampant false positives, prompting developers to build open-source Python detection alternatives. Finally, developers using Cursor are actively sharing .cursorrules patterns to improve reliability and reduce hallucinations, a sign that prompt engineering at scale is becoming infrastructure code.

📊 Framework/Model | Key Update | Strategic Impact

| Framework/Model | Key Update | Strategic Impact |
| --- | --- | --- |
| **OpenClaw v2026.4.10** | Bundled Codex provider, plugin-owned app-server, new auth paths | Replaces legacy OpenAI routes, sets standard for agentic runtimes |
| **Gemma 4** | Experimental any-to-any multimodal pipeline | Proves open-weight models can handle cross-modal reasoning natively |
| **Project Glasswing** | Formal verification for AI-generated code | Shifts security from heuristic testing to mathematical proofs |
| **MCP Ecosystem** | De facto standard with 400+ native integrations | Eliminates custom adapter development, accelerates agent tooling |
| **VectifyAI/PageIndex** | Vectorless, reasoning-based RAG | Challenges embedding dominance, improves retrieval accuracy |

❓ FAQ: Today's AI News Explained

- Q: What broke in Claude Code v2.1.97 and how do I fix it? A: The `/buddy` skill was abruptly removed, causing workflow failures. The immediate fix is to adopt the Claude Advisor tool for dynamic model routing between Opus, Sonnet, and Haiku, or downgrade to v2.1.96 until a compatible replacement lands.

- Q: Is MCP actually a standard or just hype? A: It is a standard now. Over 400 servers integrate natively via activepieces, and frameworks like OpenClaw and Codex have dropped custom adapters in favor of MCP discovery. If you build tools, publish an MCP server.

- Q: Why is Qwen 3.5 outperforming proprietary models? A: It is not the base model alone. Community distillations like Jackrong/Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled extract advanced reasoning patterns, and Unsloth/GGUF optimizations make it deployable on consumer hardware at 1/10th the inference cost.

- Q: What is Project Glasswing and why does it matter for developers? A: Anthropic's Project Glasswing applies formal verification to AI-generated code. It matters because it shifts security from post-deployment patching to pre-deployment mathematical guarantees, which should sharply reduce production vulnerabilities.

- Q: Are traditional RAG pipelines dead after VectifyAI/PageIndex? A: Not dead, but forced to evolve. Vectorless reasoning-based indexing outperforms embeddings on complex, multi-hop queries. Pair it with microsoft/markitdown for clean ingestion, and you can expect higher retrieval accuracy with lower overhead.

🔮 Editor's Take: The era of wrapping a single API call and calling it an AI product is dead. We are entering the age of deterministic, self-healing agent runtimes that negotiate their own toolchains, verify their own code, and run on open weights you can actually deploy. If you are still treating AI like a magic chatbot, start treating it like distributed infrastructure. The stack is breaking so it can scale.